TL;DR

- Multi cloud data pipelines offer flexibility, redundancy, and cost optimization but add complexity in terms of orchestration, governance, and monitoring.

- Key architectural layers include ingestion, storage, transformation, orchestration, observability, and governance.

- Tools like DBT and Airflow are critical for managing transformations and orchestration in multi cloud environments.

- The estimated monthly cost for a mid-size enterprise’s multi cloud pipeline can range from $30K to $50K, depending on data volume and infrastructure needs.

- A 3-phase approach (Foundation, Transformation, and Reliability & Governance) is recommended for building a production-grade multi cloud pipeline.

Your Pipeline Just Failed. Again.

It’s 3 AM, and your phone won’t stop buzzing. Your data pipeline has failed again. The root cause? A schema change in AWS that broke your GCP transformations. Your on-call engineer is debugging cross-cloud authentication while your CFO is emailing to ask why the data warehouse bill jumped 40% this quarter.

Welcome to multi-cloud data engineering.

Here’s what the vendor case studies don’t tell you: multi-cloud data pipelines are significantly harder than single-cloud ones, and the challenges aren’t just technical; they’re organizational, financial, and operational.

But the numbers tell a compelling story. According to Gartner’s November 2024 forecast, 89% of enterprises have embraced multi-cloud strategies, and 90% of organizations will adopt hybrid cloud approaches through 2027. Public cloud spending was forecast to reach $723.4 billion in 2025, up 21.5% year over year.

This guide provides a practical framework for building reliable, cost-effective multi-cloud data pipelines, drawn from Innovatics’ implementations across finance, retail, manufacturing, and pharma. You’ll learn:

- Why multi-cloud (and when single-cloud makes more sense)

- The 6 architectural layers that matter

- How DBT, Airflow, and cloud-native tools fit together

- Real cost breakdown: $30-50K/month for mid-size enterprises

- The 3-phase implementation sequence we use

- Testing, observability, and governance at scale

- Seven failures we’ve seen (and fixed)

Let’s cut through the vendor marketing and talk about what actually works.

Why Multi Cloud Data Pipelines Are Rising (And Why They’re Harder Than You Think)

Multi-cloud isn’t a technology choice; it’s a business strategy, but it’s not always the right strategy.

The Honest Business Case

1. Cost Optimization (When Done Right)

Leverage pricing differences, such as AWS spot instances versus GCP sustained use discounts, and place data strategically to avoid egress fees that can quietly become your largest line item.

Real savings potential: 20-35% cost reduction compared to single-cloud deployments.

Reality check: Those savings evaporate if you don’t actively manage complexity. Data transfer costs are often the forgotten budget killer, and can account for 10-20% of your total cloud spend.

2. Redundancy & Resilience

No single point of failure. Geographic disaster recovery. SLA improvements from 99.95% to 99.99%.

But here’s the catch: your orchestration layer becomes the new single point of failure if it is not architected correctly. According to the State of Airflow 2025 report, 72% of companies noted significant effects on internal systems, team productivity, and even revenue from pipeline disruptions.

3. Best-of-Breed Flexibility

AWS for compute, GCP for ML, Azure for enterprise integration. This is the promise: avoid vendor lock-in while cherry-picking the best tools.

72% of enterprises cite vendor lock-in avoidance as their primary multi-cloud driver, but you’re trading vendor lock-in for complexity lock-in, and that has real costs in team expertise, tooling, and operational overhead.

4. Compliance & Data Sovereignty

GDPR, CCPA, NCUA, and APRA regulations demand data residency, and financial data must stay in specific regions. Healthcare data requires HIPAA-compliant infrastructure. Multi-cloud gives you the geographic flexibility to meet these requirements.

But governance becomes exponentially harder when you’re managing policies across AWS IAM, Azure RBAC, and GCP Cloud IAM simultaneously.

The Honest Challenges

| Benefit | Real Cost |
|---|---|
| Cost optimization | Complexity tax |
| Redundancy | Orchestration overhead |
| Flexibility | Skills gap |
| Compliance | Governance fragmentation |

When Single-Cloud Makes Sense

If you’re processing less than 10TB monthly, have minimal compliance needs, and have deep expertise in one cloud, stay single-cloud. Multi-cloud for its own sake is over-engineering.

Multi-cloud is for enterprises with large data volumes (10TB+ monthly), specific compliance requirements, disaster recovery mandates, or strategic vendor diversification needs. Otherwise, you’re building infrastructure you don’t need.

The 6 Layers of Modern Data Engineering Architecture

Every reliable multi cloud pipeline has the same 6 layers. The tools change, but the architecture pattern doesn’t.

Here’s the blueprint we use at Innovatics across finance, retail, and pharma clients:

Layer 1: Data Ingestion

Getting data in: batch or streaming.

Batch Ingestion:

- Cloud-native: AWS Glue, Azure Data Factory, Google Cloud Batch

- Connector platforms: Airbyte (open-source), Fivetran (managed, 400+ connectors)

- Custom: Python/Spark when you need control

Streaming Ingestion:

- Message queues: Kafka, Kinesis, Event Hubs, Pub/Sub

- Change Data Capture: Debezium, AWS DMS for database changes

- Real-time APIs

Multi-Cloud Reality:

Each cloud has different APIs and authentication. An abstraction layer, usually Airflow operators or unified connectors, prevents vendor lock-in.
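One way to picture such an abstraction layer is a small interface that pipeline code depends on, with one adapter per cloud. The sketch below is illustrative only: `ObjectStore`, `InMemoryStore`, and `copy_object` are hypothetical names, and a real adapter would wrap each provider's own SDK rather than a dictionary.

```python
from typing import Protocol


class ObjectStore(Protocol):
    """Hypothetical cloud-neutral interface; not part of any SDK."""

    def read(self, key: str) -> bytes: ...
    def write(self, key: str, data: bytes) -> None: ...


class InMemoryStore:
    """Stand-in adapter; real adapters would wrap S3, GCS, or ADLS clients."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def read(self, key: str) -> bytes:
        return self._objects[key]

    def write(self, key: str, data: bytes) -> None:
        self._objects[key] = data


def copy_object(src: ObjectStore, dst: ObjectStore, key: str) -> None:
    """Pipeline code sees only the interface, never a provider SDK."""
    dst.write(key, src.read(key))


aws_like = InMemoryStore()
gcp_like = InMemoryStore()
aws_like.write("orders/2024-01-01.csv", b"id,amount\n1,9.99\n")
copy_object(aws_like, gcp_like, "orders/2024-01-01.csv")
```

Because `copy_object` only knows the interface, swapping a provider means writing one new adapter instead of touching every pipeline.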

Innovatics Approach:

NexML automates ingestion pipeline creation. iERA extracts structured data from documents (PDFs, invoices, medical records) that traditional tools miss.

Layer 2: Storage

Where data lives: lakes or warehouses.

Data Lakes:

S3, ADLS Gen2, GCS plus table formats (Delta Lake, Iceberg) for ACID transactions.

Data Warehouses:

- Cloud-agnostic: Snowflake, Databricks

- Cloud-native: Redshift, Synapse, BigQuery

Multi-Cloud Challenge:

Data gravity: moving terabytes between clouds costs thousands monthly. Example: moving 5TB daily between AWS and GCP without optimization = $75K/month in egress fees.

Solution:

Process data where it lives. Replicate only what’s necessary.

Real example from our payment gateway client: Transaction data stays in AWS (where it originates), with only aggregated summaries replicated to GCP BigQuery for analysis. Monthly savings: $8K in avoided egress fees.
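A minimal sketch of the "replicate only aggregates" idea, assuming transactions are plain dictionaries with `date` and `amount` fields (both field names and the sample rows are made up for illustration). The raw rows stay in the source cloud, and only the much smaller summary would be sent across.

```python
from collections import defaultdict


def summarize_by_day(transactions):
    """Collapse raw transactions into one summary row per day."""
    totals = defaultdict(lambda: {"count": 0, "amount": 0.0})
    for tx in transactions:
        day = totals[tx["date"]]
        day["count"] += 1
        day["amount"] += tx["amount"]
    return [
        {"date": date, "count": v["count"], "amount": round(v["amount"], 2)}
        for date, v in sorted(totals.items())
    ]


# Made-up sample rows standing in for data that stays in the source cloud.
raw = [
    {"date": "2024-01-01", "amount": 10.0},
    {"date": "2024-01-01", "amount": 5.5},
    {"date": "2024-01-02", "amount": 7.25},
]
summary = summarize_by_day(raw)  # only these rows would cross clouds
```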

Layer 3: Transformation

Making data useful: business logic, aggregations, feature engineering.

DBT (Analytics Engineering):

- SQL-first transformations

- Version control, testing, documentation built-in

- Works with Snowflake, BigQuery, Redshift, Synapse, Databricks

- 90,000 projects in production, $100M+ ARR (October 2025)

Spark (Scale + Complexity):

- Databricks, EMR, Dataproc

- For large-scale transformations (1TB+ daily)

Multi-Cloud Approach:

DBT on cloud-agnostic Snowflake. Spark containerized with consistent configs across clouds.

Innovatics Differentiation:

NexML provides AutoML feature engineering and automatic transformation for ML-ready datasets without manual coding.

Layer 4: Orchestration

The conductor coordinating everything.

Apache Airflow:

Airflow dominates with 320 million downloads in 2024, 10x more than its nearest competitor. More than 77,000 organizations use it, and 95% cite it as critical to their business.

- Python DAGs, extensible

- Managed: AWS MWAA, Cloud Composer, Astronomer

Modern Alternatives:

- Prefect: Easier dev experience

- Dagster: Software-defined, testing-first

Multi-Cloud Role:

Airflow orchestrates workflows across all clouds with cloud-specific operators.
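At its core, cross-cloud orchestration means running tasks in dependency order regardless of where each one executes. The sketch below uses Python's standard-library `graphlib` instead of Airflow so that it runs anywhere; the task names are hypothetical, and a real Airflow DAG would add scheduling, retries, and cloud-specific operators on top of this ordering.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical names).
dependencies = {
    "extract_aws": set(),
    "load_gcp": {"extract_aws"},
    "transform_gcp": {"load_gcp"},
    "score_azure": {"transform_gcp"},
}

# static_order() yields tasks so that every dependency runs first.
run_order = list(TopologicalSorter(dependencies).static_order())
for task_name in run_order:
    print(f"running {task_name}")
```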

Decision Framework:

- Airflow if: Existing expertise, complex workflows

- Prefect if: Greenfield project, faster development

- Dagster if: Software engineering team, test-driven culture

Layer 5: Observability

Monitoring, logging, and alerting.

What to Track:

- Data freshness (SLA compliance)

- Row count anomalies (±20% from baseline)

- Schema changes (breaking versus non-breaking)

- Pipeline execution time

- Cost per run

Tools:

- Data-specific: Monte Carlo, Great Expectations

- Infrastructure: Datadog, Grafana + Prometheus

- Cloud-native: CloudWatch, Stackdriver

Multi-Cloud Challenge:

Fragmented monitoring across platforms.

Solution:

Centralized observability dashboard aggregating metrics from all clouds.

Layer 6: Governance

Access control, lineage, compliance.

Data Lineage:

- OpenLineage (open standard)

- Cloud-native: Glue Catalog, Purview, Data Catalog

Access Control:

- Cloud IAM + centralized policy engine

- Column-level security for PII

- Audit logging for compliance

Compliance:

- Automated PII detection

- Retention enforcement

- Regulatory reporting

Multi-Cloud Complexity:

Different governance tools per cloud.

Solution:

Universal policies (Terraform) translated to cloud-specific controls.

Navigating the Multi-Cloud Tool Landscape

The modern data stack has 100+ tools. Here’s what actually matters for multi-cloud pipelines and how to choose.

DBT: The Analytics Engineer’s Best Friend

- What it solves: SQL-based transformations with version control, testing, and auto-generated documentation.

- When to use: Analytics use cases, SQL-savvy teams, data mart creation.

- Multi-cloud fit: Works with Snowflake (cloud-agnostic), BigQuery, Redshift, Synapse, Databricks.

- Cost: Free (open-source) or $100-500/seat/month (dbt Cloud).

- Real outcome: Teams report 50-70% faster deployment with built-in quality checks. DBT has seen 85% year-over-year growth in Fortune 500 adoption.

Orchestration: Airflow vs Prefect vs Dagster

| Criterion | Airflow | Prefect | Dagster |
|---|---|---|---|
| Best for | Established teams | New projects | Software engineers |
| Learning curve | Steep | Moderate | Moderate |
| Testing | Add-on (pytest) | Built-in | Built-in |
| UI | Basic | Modern | Modern |
| Community | Massive | Growing | Growing |
| Cost | Free OSS + hosting | Free + Cloud tiers | Free OSS + hosting |

Recommendation:

- Airflow if you have existing expertise or need the extensive connector ecosystem

- Prefect for faster development and better developer experience

- Dagster for test-driven engineering culture

Cloud-Native ETL: When to Use

AWS Glue:

- Serverless Spark-based

- Auto schema discovery

- Best for: AWS-centric with Spark needs

- Cost: $0.44/DPU-hour

Azure Data Factory:

- Visual, low-code

- 90+ native connectors

- Best for: Microsoft ecosystem, hybrid cloud

- Cost: $1.50/1,000 executions

Google Dataflow:

- Apache Beam runtime

- Unified batch + streaming

- Best for: Real-time + ML on GCP

- Cost: $0.041/vCPU-hour

The Multi-Cloud Reality

Most enterprises use hybrid approaches:

- Airflow orchestrates across clouds

- Cloud-native for ingestion within each

- Neutral platforms (Snowflake, Databricks) for transformation

Real example: Our manufacturing client uses Azure Data Factory (on-prem SAP integration) → Airflow (cross-cloud orchestration) → NexML (ML pipelines) → PowerBI (dashboards). Hybrid is the norm, not the exception.

Data Integration: Fivetran vs Airbyte

Fivetran:

- 400+ pre-built connectors

- Fully managed

- Cost: $1-2 per million rows

Airbyte:

- Open-source Fivetran alternative

- Self-hosted or cloud

- Cost-effective at scale

Decision:

Fivetran for speed-to-value. Airbyte for cost optimization at high volume (50M+ rows monthly).

Best Practices for Reliability at Scale

Reliable pipelines don’t happen by accident. They’re engineered with testing, versioning, monitoring, and failure handling built in from day one.

Here’s our framework from 50+ pipeline implementations:

Practice 1: Comprehensive Testing

Data Quality Tests (Great Expectations):

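```python
# Example validations; `validator` is assumed to be a Great Expectations
# Validator already created for the target table
validator.expect_column_values_to_not_be_null("customer_id")
validator.expect_column_values_to_be_between("age", min_value=0, max_value=120)
# Row-count parity between the target table and the source table
validator.expect_table_row_count_to_equal_other_table(other_table_name="source")
```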

Unit Tests:

Test transformation logic independently with mocked data sources.

Integration Tests:

End-to-end validation with production-like data volumes and cross-cloud connectivity.

Regression Tests:

Compare outputs over time to detect schema drift and monitor data distributions.
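Schema drift detection can be as simple as diffing two column-to-type mappings. The sketch below assumes a convention that is not stated in the text: removed or retyped columns count as breaking, and added columns count as non-breaking. The function name and sample schemas are hypothetical.

```python
def diff_schema(old, new):
    """Compare two {column: type} mappings and classify the changes."""
    removed = sorted(set(old) - set(new))
    added = sorted(set(new) - set(old))
    retyped = sorted(c for c in set(old) & set(new) if old[c] != new[c])
    return {
        "breaking": removed + retyped,  # downstream queries may fail
        "non_breaking": added,          # new columns are usually safe
    }


yesterday = {"customer_id": "int", "age": "int", "email": "str"}
today = {"customer_id": "str", "age": "int", "signup_date": "date"}
drift = diff_schema(yesterday, today)
```

A CI job could fail the build whenever `drift["breaking"]` is non-empty and merely log the non-breaking additions.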

What You Need:

- Automated test suites in CI/CD (GitHub Actions, GitLab CI)

- Validation at each pipeline stage

- Rollback procedures when tests fail

Real Impact:

A financial services client caught a schema change 2 hours before production deployment. Rollback prevented an estimated $200K in incorrect trades.

Practice 2: Data Lineage

Why It Matters:

- Impact analysis: “What breaks if we change this table?”

- Regulatory compliance (SOC2, GDPR audits)

- Debugging data quality issues

- Knowledge transfer when engineers leave

Implementation Options:

OpenLineage (Recommended):

- Open standard supported by Airflow, Spark, DBT

- Vendor-neutral

- Growing ecosystem

Cloud-Native:

- AWS Glue Data Catalog

- Azure Purview

- GCP Data Catalog

Commercial:

- Atlan, Collibra (enterprise features)

Innovatics Approach:

Auto-generate lineage from code. Visual dependency graphs show source → transformation → destination at each stage.

Real example: Our pharma client needed an audit trail for sample distribution. Lineage tracked from warehouse → forecast model → distribution plan. They passed the regulatory audit on the first try.

Practice 3: Pipeline Versioning

Git-Based Workflow:

```
pipelines/
├── production/
│   ├── v1.2/
│   │   ├── ingestion/
│   │   ├── transformation/
│   │   └── dbt_models/
│   └── v1.3/ (new logic)
├── staging/
└── rollback_procedures/
```

Best Practices:

- All pipeline code in version control (no manual changes)

- Feature branches for changes

- Code review before merge

- Tagged releases

- Blue-green deployments (run new version parallel, compare outputs)
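The "compare outputs" step of a blue-green deployment might look like the sketch below, which assumes each version produces a mapping of key to numeric value. The function name, the 1% relative tolerance, and the sample values are all illustrative assumptions.

```python
def compare_outputs(current, candidate, tolerance=0.01):
    """Return keys that are missing on one side or differ beyond `tolerance`."""
    mismatches = []
    for key in sorted(set(current) | set(candidate)):
        old, new = current.get(key), candidate.get(key)
        if old is None or new is None:
            mismatches.append(key)  # row missing on one side
        elif abs(new - old) > tolerance * max(abs(old), 1e-9):
            mismatches.append(key)  # value drifted beyond tolerance
    return mismatches


v1_2 = {"store_1": 100.0, "store_2": 250.0, "store_3": 80.0}
v1_3 = {"store_1": 100.5, "store_2": 300.0}
diff = compare_outputs(v1_2, v1_3)
```

An empty result would support promoting the new version; anything else is a reason to keep the old one live and investigate.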

Real Benefit:

Our retail client rolled back the demand forecast model when v2.0 showed 15% higher error rate. Zero business impact.

Practice 4: SLA Monitoring

Define Clear SLAs:

- Freshness: “Customer data updated within 15 minutes”

- Completeness: “99.9% of records processed”

- Accuracy: “Zero critical business rule violations”

- Availability: “99.5% pipeline uptime”

Monitoring Stack:

Metrics Collection (CloudWatch, Stackdriver, custom) → Storage (Prometheus, InfluxDB) → Visualization (Grafana, Datadog) → Alerting (PagerDuty, Slack, email) → Incident Response (Runbooks, automated remediation)

Key Metrics:

- Pipeline execution time (baseline: 45min, alert if >60min)

- Row counts (expected: 1M ± 50K daily)

- Data arrival time (SLA: 30min after source update)

- Error rates by stage (alert if >0.1%)

- Cost per pipeline run (track drift)
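The thresholds listed above translate directly into a per-run check. This is a minimal sketch: the metric field names and the `check_run` function are hypothetical, and the numbers are the example baselines from the list, not recommendations.

```python
def check_run(metrics):
    """Return alert messages for one pipeline run, using the example thresholds."""
    alerts = []
    if metrics["execution_minutes"] > 60:
        alerts.append("execution time above 60 min")
    if abs(metrics["row_count"] - 1_000_000) > 50_000:
        alerts.append("row count outside 1M +/- 50K")
    if metrics["arrival_delay_minutes"] > 30:
        alerts.append("data arrived later than the 30 min SLA")
    if metrics["error_rate"] > 0.001:
        alerts.append("error rate above 0.1%")
    return alerts


run = {
    "execution_minutes": 72,
    "row_count": 930_000,
    "arrival_delay_minutes": 12,
    "error_rate": 0.0004,
}
alerts = check_run(run)
```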

Practice 5: Failure Handling

Idempotency:

Re-running the same pipeline produces identical results. No duplicate records. Safe retries.
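A common way to get idempotency is to write by primary key instead of appending. The sketch below models the warehouse as a dictionary keyed on `id`; in a real warehouse the same idea is usually expressed as a merge or upsert statement. All names here are illustrative.

```python
def upsert(target, batch, key="id"):
    """Merge `batch` into `target` by key, so re-runs do not duplicate rows."""
    for row in batch:
        target[row[key]] = row
    return target


warehouse = {}
batch = [{"id": 1, "amount": 10}, {"id": 2, "amount": 20}]

upsert(warehouse, batch)
first_run = dict(warehouse)
upsert(warehouse, batch)  # simulated retry of the same batch
```

After the retry the warehouse holds exactly the same two rows, which is what makes automatic retries safe.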

Retry Strategies:

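```python
from datetime import timedelta

from airflow.decorators import task


# Retry up to 3 times; backoff is exponential only when enabled explicitly
@task(retries=3, retry_delay=timedelta(minutes=5), retry_exponential_backoff=True)
def extract_data():
    ...  # extraction logic goes here
```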

Dead Letter Queues (DLQ):

Failed records go to DLQ for separate investigation. Don’t block the pipeline for edge cases.

Circuit Breakers:

Stop the pipeline if the error rate exceeds 5%. Prevent cascading failures. Alert humans for investigation.
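The dead letter queue and circuit breaker can be combined in one processing loop, sketched below. The 5% threshold comes from the text; `CircuitOpen`, `process`, `parse_amount`, and the `min_sample` guard (which keeps a single early failure from halting the run) are assumptions added for illustration.

```python
class CircuitOpen(Exception):
    """Raised when the error rate crosses the threshold."""


def process(records, handler, max_error_rate=0.05, min_sample=20):
    """Send failures to a dead letter list; abort if too many records fail."""
    processed, dead_letters = [], []
    for seen, record in enumerate(records, start=1):
        try:
            processed.append(handler(record))
        except ValueError as exc:
            dead_letters.append({"record": record, "error": str(exc)})
        # Trip only after a minimum sample has been seen.
        if seen >= min_sample and len(dead_letters) / seen > max_error_rate:
            raise CircuitOpen(f"{len(dead_letters)}/{seen} records failed")
    return processed, dead_letters


def parse_amount(value):
    return float(value)  # raises ValueError on malformed input


# One bad record in 100 stays below the threshold and lands in the DLQ.
ok, dlq = process(["1.5"] * 99 + ["n/a"], parse_amount)
```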

Real Failure Scenario:

API rate limit hit during ingestion. The circuit breaker stopped the pipeline. Alert sent. The engineer investigated. Root cause: API usage spike from new integration. Solution: Rate limiting + retry logic. Prevented downstream corruption.

Ensuring Observability and Governance at Scale

Monitoring tells you if your pipeline is working. Observability tells you why it’s broken. In multi-cloud environments, this distinction matters.

The Three Pillars of Observability

1. Metrics (Quantitative)

Pipeline execution time, row counts processed, error rates, resource utilization, cost per run.

2. Logs (Qualitative)

Detailed event records, error stack traces, debugging context, and audit trails.

3. Traces (Flow)

Request path across systems, performance bottlenecks, dependency mapping, cross-cloud latency.

Data-Specific Observability

- Freshness Monitoring:

```sql
SELECT table_name,
       MAX(updated_at) AS last_update,
       CURRENT_TIMESTAMP - MAX(updated_at) AS staleness
FROM data_catalog
GROUP BY table_name
HAVING CURRENT_TIMESTAMP - MAX(updated_at) > INTERVAL '1 hour';
```

- Volume Anomaly Detection: Track daily row counts. Use statistical thresholds (±2 standard deviations). Alert on unexpected spikes or drops.

Example: Our retail client’s POS data normally shows 500K-550K rows daily. An alert triggered at 320K rows. Investigation found a store network outage affecting 15 locations.

- Distribution Shift Monitoring: Track statistical distributions (mean, median, percentiles). Detect data quality degradation. Flag unexpected patterns.

Example: A finance client’s transaction amounts showed a distribution shift. Investigation found a new merchant category with different price points. Retraining before deployment prevented ML model degradation.
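The ±2 standard deviation rule for volume anomalies fits in a few lines of standard-library Python. The history values below are invented to fall in the 500K-550K range from the retail example, and `is_volume_anomaly` is a hypothetical helper, not the client's implementation.

```python
from statistics import mean, stdev


def is_volume_anomaly(history, today, max_sigma=2.0):
    """Flag `today` if it is more than `max_sigma` standard deviations from the mean."""
    mu, sigma = mean(history), stdev(history)
    return abs(today - mu) > max_sigma * sigma


# Illustrative daily row counts in the 500K-550K range.
history = [505_000, 520_000, 548_000, 512_000, 531_000, 540_000, 518_000]
outage_day = is_volume_anomaly(history, 320_000)
normal_day = is_volume_anomaly(history, 525_000)
```

A fixed-sigma rule is easy to explain to stakeholders, though data with strong weekly seasonality would likely need a per-weekday baseline instead.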

Multi-Cloud Governance Framework

The Challenge:

Each cloud has different tools:

- AWS: Lake Formation, IAM, CloudTrail

- Azure: Purview, RBAC, Policy

- GCP: Cloud IAM, Data Catalog, Audit Logs

The Solution: Layered Approach

- Layer 1: Universal Policies (Cloud-Agnostic). Data classification rules (PII, PHI, confidential, public). Retention policies by classification. Access principles (least privilege, just-in-time). Compliance requirements (GDPR, CCPA, HIPAA).

- Layer 2: Cloud-Specific Implementation. Terraform/IaC translates policies to cloud-native controls. Automated provisioning. Regular compliance audits.

- Layer 3: Cross-Cloud Orchestration. Central governance dashboard. Unified access request workflows. Consolidated compliance reporting.

Key Governance Components

1. Data Classification: Auto-detect PII using ML and regex patterns, tag data assets at ingestion, and enforce handling policies automatically.

2. Access Control: RBAC (role-based access). ABAC (attribute-based, for fine-grained control). Just-in-time access with temporary elevated permissions. Approval workflows for sensitive data.

3. Compliance Automation: GDPR right to deletion (automated data purge). Audit trail generation. Retention enforcement. Regulatory reporting.
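The regex half of PII auto-detection can be sketched as follows. The two patterns are deliberately simple and will miss many real-world formats; the pattern names, `classify_columns`, and the sample rows are all hypothetical, and a production system would pair this with ML-based detection as described above.

```python
import re

# Deliberately simple patterns for illustration only.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify_columns(sample_rows):
    """Tag each column with the PII types found in its sampled values."""
    tags = {}
    for row in sample_rows:
        for column, value in row.items():
            for label, pattern in PII_PATTERNS.items():
                if pattern.search(str(value)):
                    tags.setdefault(column, set()).add(label)
    return tags


sample = [
    {"contact": "ana@example.com", "note": "SSN 123-45-6789 on file", "total": 42},
    {"contact": "bob@example.org", "note": "no issues", "total": 17},
]
tags = classify_columns(sample)
```

Running this on a sample at ingestion time gives the tags that downstream access policies can key on.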

Real Example: Financial Services Multi-Cloud Governance

Challenge:

NCUA (US) and APRA (Australia) compliance across AWS and Azure.

Solution:

- Central policy engine (Terraform)

- Automated PII detection and masking

- Cross-cloud audit logging to central SIEM

- 90-day compliance reports

Outcome:

Passed regulatory audits in both jurisdictions. Reduced manual governance work by 60%.

Innovatics in Action: Multi Cloud Pipelines at Scale

Theory is easy, but production is hard. Here are three real pipelines we’ve built, with their challenges, architectures, and outcomes.

Example 1: Payment Gateway Cash Flow Prediction

Challenge:

Real-time cash flow visibility across payment channels for proactive liquidity management.

Multi-Cloud Architecture:

Payment APIs (AWS) → Kinesis Streaming (AWS) → BigQuery Data Warehouse (GCP) → NexML Forecasting Models (Azure ML) → PowerBI Dashboards (Azure)

Why Multi-Cloud?

- Payment data originated in AWS (existing infrastructure)

- GCP BigQuery for cost-effective analytics at petabyte scale

- Azure for ML (existing team expertise) and BI (enterprise standard)

Technology Stack:

- Ingestion: AWS Kinesis

- ETL: Python + Airflow

- Storage: Google BigQuery

- ML: NexML (AutoML platform)

- Visualization: PowerBI

Outcomes:

- Real-time cash flow visibility (15-minute latency)

- Proactive liquidity management

- 40% reduction in working capital stress

- $200K monthly savings from optimized cash positioning

- Infrastructure cost: $35K/month (justified by ROI)

Example 2: Manufacturing Unified Reporting

Challenge:

Data scattered across SAP (on-prem), Excel files, and mobile apps (Bizom). No single source of truth. Manual reporting consumes 50+ hours weekly.

Single-Cloud Architecture (Azure End-to-End):

SAP + Excel + Mobile Apps → Azure Data Factory (ETL + Hybrid Connectivity) → Azure Synapse (Data Warehouse) → PowerBI (Management Dashboards)

Why Single-Cloud?

- Existing Microsoft enterprise agreement

- SAP hybrid connectivity easier in Azure

- PowerBI native integration eliminates data movement

- Team already Azure-certified

Technology Stack:

- ETL: Azure Data Factory

- Storage: Azure Synapse

- Orchestration: Azure Logic Apps

- BI: PowerBI

Outcomes:

- Single source of truth for manufacturing operations

- Real-time production visibility

- 50% reduction in reporting time (from 50 hours to 25 hours weekly)

- Eliminated Excel-based manual reporting errors

- Infrastructure cost: $18K/month

Lesson:

Multi-cloud isn’t always the answer. Deep ecosystem integration sometimes trumps multi-cloud flexibility.

Example 3: Retail Demand Forecasting Across Clouds

Challenge:

SKU-level demand prediction across 200+ stores for inventory optimization. Reducing both stockouts and overstock.

Hybrid Architecture:

Point-of-Sale Systems → AWS Glue (Batch Ingestion) → S3 Data Lake (AWS) → NexML Forecasting (Deployed on Azure ML) → REST APIs → Store Ordering Systems

Why Hybrid?

- POS data already flowing to AWS (existing investment)

- ML expertise concentrated in Azure team

- No need to move raw data (process in AWS, replicate aggregations only)

Technology Stack:

- Ingestion: AWS Glue

- Storage: AWS S3 + Delta Lake

- Transformation: Spark on EMR

- ML: NexML on Azure ML

- API: Azure Functions

Outcomes:

- 30% reduction in stockouts

- 25% reduction in overstock

- Optimized working capital (freed $2.3M in tied inventory)

- ROI: 12x in first year

- Infrastructure cost: $28K/month

Cost Optimization:

Data stayed in AWS (avoided egress fees). Only aggregated features sent to Azure for ML, saving $12K/month in data transfer costs.

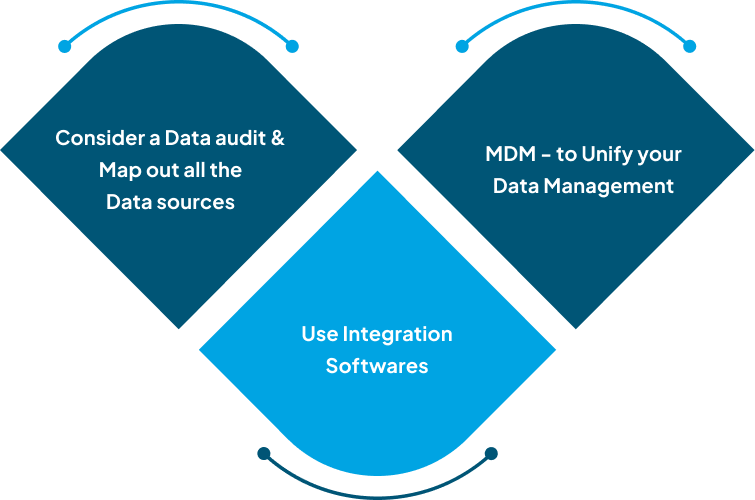

Build Your Multi-Cloud Pipeline: The 3-Phase Approach

Every successful multi cloud pipeline follows the same implementation pattern. Here’s the roadmap we use at Innovatics:

Phase 1: Foundation (Weeks 1-4)

Goal:

Get data flowing from source to destination.

Tasks:

- Choose storage layer (Snowflake for cloud-agnostic, BigQuery for GCP analytics, or Synapse for Azure ecosystem)

- Implement basic batch ingestion (start with Airbyte for quick wins, add cloud-native tools as needed)

- Set up orchestration (Airflow or Prefect, deploy managed service like MWAA or Cloud Composer)

- Create first simple pipeline (source → warehouse, no transformation yet)

Deliverables:

- Data moving reliably from source systems to warehouse

- Basic monitoring in place

- Team trained on chosen tools

- Documentation of architecture decisions

Estimated Cost:

$5-10K/month for mid-size enterprise

Team Required:

2 data engineers + 1 architect

Phase 2: Transformation & Automation (Weeks 5-8)

Goal:

Make data useful for business decisions.

Tasks:

- Implement DBT (create staging models, build dimension and fact tables, add data quality tests)

- Build business logic transformations (KPIs, metrics, aggregations)

- Set up CI/CD (GitHub Actions or GitLab CI, automated testing, deployment pipelines)

- Create initial dashboards (PowerBI, Tableau, or Looker with core business metrics)

Deliverables:

- Analytics-ready datasets with proper data models

- Automated workflows with version control

- Self-service BI for business users

- Documented transformation logic

Estimated Cost:

+$10-15K/month (storage growth + compute for transformations)

Team Required:

+1 analytics engineer (now 3-4 people total)

Phase 3: Reliability & Governance (Weeks 9-12)

Goal:

Production-grade enterprise system.

Tasks:

- Implement comprehensive testing (Great Expectations, integration tests, regression tests)

- Set up observability (Grafana dashboards, automated alerting with PagerDuty/Slack, incident runbooks)

- Configure data lineage (OpenLineage integration, documentation generation)

- Establish governance (access controls with IAM, data classification automation, compliance reporting)

- Optimize costs (right-size resources, implement spot instances, establish data lifecycle policies)

Deliverables:

- Enterprise-ready pipeline with SLAs

- Comprehensive documentation and lineage

- Governance framework implemented

- Cost-optimized infrastructure

Estimated Cost:

+$5-10K/month (observability tools + governance platforms)

Team Required:

+1 platform engineer (now 4-5 people total)

Total 12-Week Investment

| Phase | Duration | Team Size | Monthly Cost |
|---|---|---|---|
| 1 – Foundation | 4 weeks | 3 people | $5–10K |
| 2 – Transformation | 4 weeks | 4 people | $15–25K |
| 3 – Reliability | 4 weeks | 5 people | $20–35K |
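The monthly cost column is cumulative: each phase adds its increment to the running total from the earlier phases. The short check below reproduces the table from the per-phase increments quoted in the phase descriptions (values in thousands of dollars per month).

```python
from itertools import accumulate

# Incremental monthly cost added by each phase, in $K (low, high).
increments = [(5, 10), (10, 15), (5, 10)]

# Running totals of the low and high ends across phases.
cumulative = list(accumulate(increments, lambda a, b: (a[0] + b[0], a[1] + b[1])))
for phase, (low, high) in enumerate(cumulative, start=1):
    print(f"Phase {phase}: ${low}-{high}K/month")
```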

Ongoing:

$30-50K/month infrastructure + team costs

Alternative:

Partner with Innovatics for 40-50% faster implementation, lower risk, and proven frameworks.

7 Multi Cloud Pipeline Mistakes We’ve Seen (And Fixed)

Mistake 1: Ignoring Data Transfer Costs

- What Happened: Client moved 5TB daily between AWS and GCP for “real-time analytics.” Monthly egress fees: $75K.

- Fix: Process data where it lives. Replicate only aggregated results. New cost: $8K/month. Savings: $67K monthly.

Mistake 2: No Testing Strategy

- What Happened: Schema change in production broke downstream ML models. Incorrect predictions led to $500K in overbought inventory.

- Fix: Automated testing in CI/CD. Schema changes are now caught in the staging environment before production deployment.

Mistake 3: Skipping Data Lineage

- What Happened: Regulatory audit required complete data flow documentation. 3 engineers spent 6 weeks manually reconstructing lineage. Cost: $180K in lost productivity.

- Fix: OpenLineage from day one. Auto-generated audit trails. Next audit: passed in 2 days.

Mistake 4: Over-Engineering

- What Happened: Built a real-time Kafka pipeline for batch analytics that ran once daily. Infrastructure cost: $40K/month. Actual requirement: daily batch processing.

- Fix: Replaced with Airflow + batch ingestion. New cost: $5K/month. Savings: $35K monthly.

Mistake 5: Vendor Lock-In (Accidentally)

- What Happened: Used cloud-specific features everywhere (AWS Lambda with proprietary triggers, Azure-specific SDKs). Migration cost estimate when consolidating: $2M+.

- Fix: Abstraction layers. Portable code. Terraform for infrastructure. Migration now possible in 8 weeks instead of 8 months.

Mistake 6: No Governance Framework

- What Happened: PII exposure in the analytics warehouse was discovered during an audit. GDPR fine: €500K. Reputational damage: immeasurable.

- Fix: Automated PII detection at ingestion. Access controls from day one. Audit logging for all data access. Zero incidents since implementation.

Mistake 7: Reactive Monitoring

- What Happened: Discovered pipeline failures from angry business users. “Where’s my morning report?” Average detection time: 4 hours after failure.

- Fix: Proactive monitoring with SLA-based alerts. Automated remediation for common failures. New detection time: 3 minutes. Business users no longer first to know about failures.

Takeaway:

Every mistake is avoidable with proper planning, but planning without experience is guesswork, and this is where partners like Innovatics add value.

Build Your Multi Cloud Pipeline the Right Way

Multi cloud data pipelines offer unmatched flexibility, resilience, and cost optimization when architected correctly. The key is understanding that multi-cloud isn’t about using every cloud for everything. It’s about strategically placing data and workloads where they perform best while maintaining governance and observability across platforms.

Success comes down to three things: choosing the right architecture pattern for your needs, implementing reliability from day one with testing and monitoring, and having the expertise to navigate the complexity. The difference between a pipeline that becomes technical debt and one that becomes a competitive advantage is in the details: the SLA definitions, the failure handling logic, the governance automation, and the cost optimization tactics.

The framework we’ve outlined here (6 architectural layers, practical tool selection, 3-phase implementation, and governance at scale) has been proven across finance, retail, manufacturing, and pharma at Innovatics.

Ready to build or modernize your multi cloud data pipeline?

At Innovatics, we’ve architected multi-cloud data platforms that deliver:

- 40% faster model deployment with NexML AutoML

- 30% lower infrastructure costs through optimization

- Enterprise-grade reliability with 99.5%+ uptime

- Full compliance across NCUA, APRA, and GDPR frameworks

We’ve built the payment gateway cash flow system that processes millions of transactions daily, the manufacturing unified reporting platform that eliminates 25 hours of manual work weekly, and the retail demand forecasting system that freed $2.3M in tied-up inventory.

Talk to our data engineering team about your multi-cloud challenges

We’ll assess your current architecture, design the right solution for your specific requirements, and implement it with proven frameworks that balance speed, cost, and reliability. No vendor marketing, no over-engineering, just practical solutions that work.

Frequently Asked Questions

What is a multi-cloud data pipeline, and why do companies adopt one?

A multi-cloud data pipeline is a system that collects, processes, and moves data across more than one cloud platform such as AWS, Microsoft Azure, and Google Cloud. Companies adopt this approach to improve reliability, reduce dependence on a single cloud vendor, and take advantage of specialized services offered by different platforms. By distributing workloads across clouds, organizations can also improve disaster recovery and maintain business continuity when one cloud service experiences disruptions.

How does a multi-cloud data pipeline improve reliability?

A multi-cloud data pipeline improves reliability by distributing workloads and data processing tasks across multiple cloud environments instead of relying on a single infrastructure provider. If one cloud service fails or experiences downtime, the pipeline can continue operating through another cloud platform. This architecture also supports scalability because organizations can process large volumes of data across different cloud infrastructures without overloading a single system.

Which tools are used to manage multi-cloud pipelines?

Many organizations use orchestration and transformation tools to manage multi-cloud pipelines effectively. Apache Airflow is widely used to schedule and coordinate workflows across cloud platforms, while DBT is commonly used for managing data transformations and modeling. Data integration platforms such as Airbyte or Fivetran help move data between systems, and cloud-agnostic analytics platforms like Snowflake or Databricks allow teams to process data consistently across multiple environments.

What are the challenges of implementing a multi-cloud data pipeline?

Implementing a multi-cloud data pipeline introduces several operational challenges. Teams must manage different authentication systems, APIs, and governance rules for each cloud platform. Monitoring pipelines also becomes more complex because logs and metrics may exist in separate environments. In addition, transferring large volumes of data between clouds can increase infrastructure costs if the architecture is not carefully planned.

When does a multi-cloud strategy make sense?

A multi-cloud strategy is usually beneficial for organizations that process large volumes of data, operate in regulated industries, or require strong disaster recovery capabilities. Companies with global operations often choose multi-cloud infrastructure to meet regional data compliance rules and maintain high availability. However, smaller organizations with simpler workloads may find a single-cloud architecture easier to manage and more cost-effective.

Neil Taylor

January 23, 2026

Meet Neil Taylor, a seasoned tech expert with a profound understanding of Artificial Intelligence (AI), Machine Learning (ML), and Data Analytics. With extensive domain expertise, Neil Taylor has established themselves as a thought leader in the ever-evolving landscape of technology. Their insightful blog posts delve into the intricacies of AI, ML, and Data Analytics, offering valuable insights and practical guidance to readers navigating these complex domains.

Drawing from years of hands-on experience and a deep passion for innovation, Neil Taylor brings a unique perspective to the table, making their blog an indispensable resource for tech enthusiasts, industry professionals, and aspiring data scientists alike. Dive into Neil Taylor’s world of expertise and embark on a journey of discovery in the realm of cutting-edge technology.